AI Abuse Is Already Here. Cinder Was Built to Fight It.

Glen Wise on why AI abuse is already causing real harm, and why Cinder exists to give defenders the firepower to fight back.

Today we’re announcing that Cinder has raised $41 million in Series B funding, led by Radical Ventures, with continued participation from Accel, Y Combinator, and Neuberger Berman, and additional participation from M12 (Microsoft’s venture fund), Mozilla Ventures, and PSP Growth.

This is an exciting milestone for Cinder, and fundraising is an important moment for any startup, but I’d like to talk about something else.

AI is causing real harm to real people, today.

The current conversation around AI is too future-looking, focused on the potential Good and Bad: massive productivity gains, catastrophic harms; cures for cancer, AI-induced unemployment; vulnerability-free software, the death of privacy. Lost in this conversation are the ways AI is already supercharging bad actors’ efforts to attack real people every day.

AI abuse is already a very serious problem, not a potential one. Unsophisticated bad actors now have access to tools that just a few years ago were limited to nation-states, and they are using them to perpetrate abuse at unprecedented scale and speed: non-consensual “deepfake nudes”, social engineering campaigns targeting the biggest companies in the world, and fraud and scams that are indistinguishable from reality and exploit the elderly. The costs of these abuses to the people and businesses attacked are not hypothetical or speculative. And they are happening right now, on platforms we all use every day.

My co-founders and I worked on the front lines of digital safety in the US government, at Meta, and at Palantir. We have fought these threats up close and built best-in-class solutions to stop online abuse. We started Cinder to make sure the people defending the internet have more firepower than the people attacking it.

The aggregate impact of AI abuse and manipulation has undermined trust in innovation and in AI itself. A few weeks ago, I participated in a discussion at the United Nations about technology-facilitated violence against women and girls, one of the most difficult trust & safety challenges of the internet era. It was an honor to be at the table with policymakers and law enforcement officials who are working together to tackle these problems head-on. Everyone there aimed to prevent and mitigate the human cost of this abuse, and some participants also aimed to slow technological development and deployment itself.

I do not think that is possible. And I do not think it is desirable. But I understand and empathize with the frustration of people confronting the very real human costs of this abuse.

Cinder was built to solve this problem: to prevent abuse and thereby enable innovation. Every technology creates opportunities and risks, and AI is no different. Cinder helps companies seamlessly protect their users, building the trust and confidence necessary to unlock the creativity and ambition of our society’s most imaginative founders and business leaders.

In an ideal world, Cinder wouldn’t exist. In the real world, there are too many adversaries, and Cinder is necessary. Today's funding will accelerate our work building mission-critical infrastructure so the world’s most innovative companies can protect their product, brand, and users from abuse and manipulation.

We’re just getting started, and if you’re interested in protecting billions of people around the world, come join us.

Glen Wise

###

About Cinder

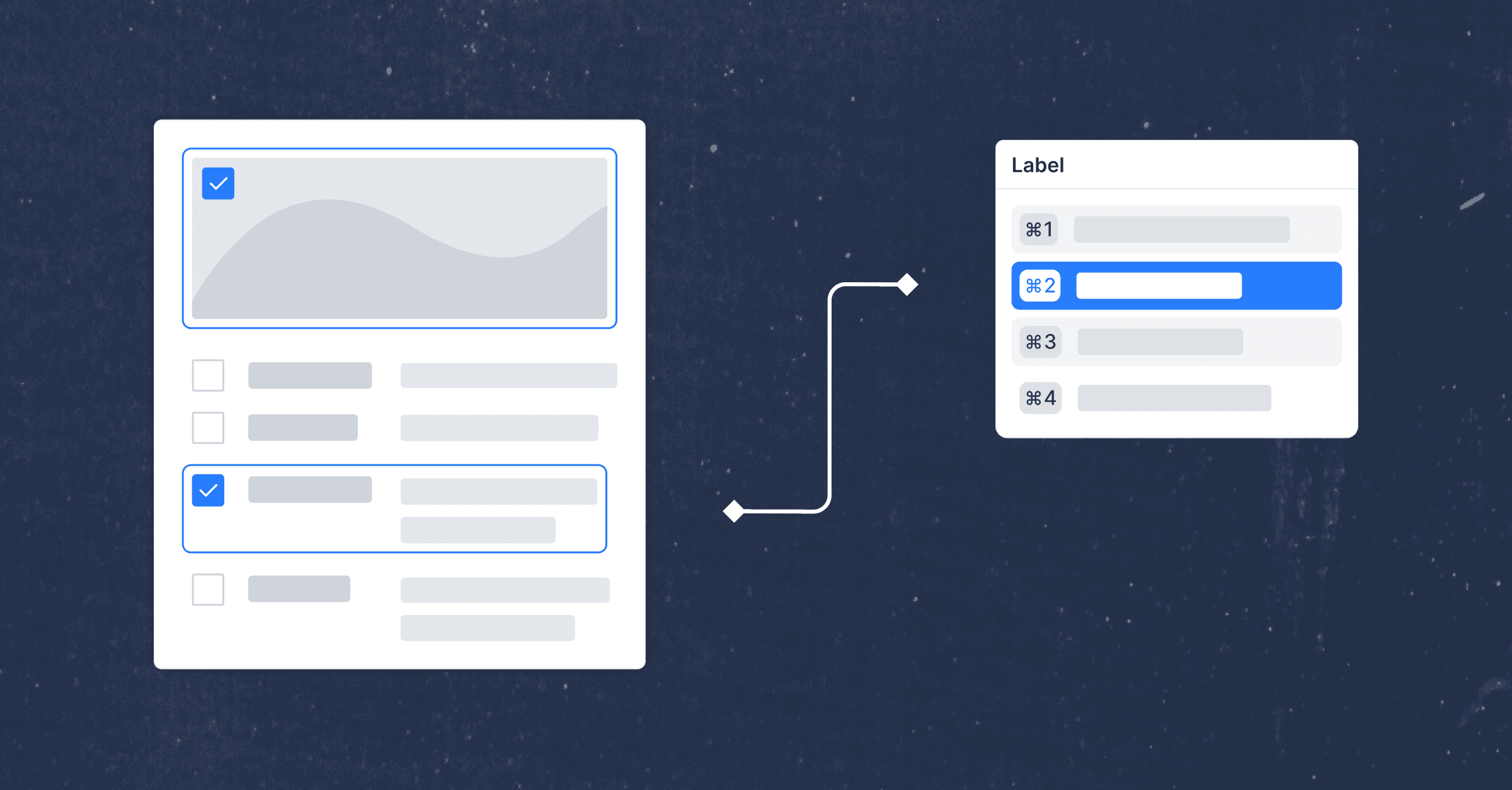

Cinder is mission-critical infrastructure protecting the world's most innovative companies from digital abuse and manipulation. Cinder unifies investigations, content review, and policy enforcement so trust and safety teams can move at the speed of the threats they face.