The EU's CSAM Failure Has Created a Moral Hazard for Lawmakers

The EU's CSAM detection gap has created an impossible operating environment for platforms, safety teams, law enforcement, and victims.

The EU's CSAM detection gap has created an impossible operating environment for platforms, safety teams, law enforcement, and victims.

Online platforms in Europe are now being asked to operate inside an impossible contradiction: remove child sexual abuse material, find more of it, move faster, but do so without a clear legal basis for some of the tools used to detect it in interpersonal communications.

That contradiction did not happen overnight. It is the result of years of legislative delay, temporary fixes, and unresolved arguments about privacy, encryption, proportionality, and platform responsibility. The EU's temporary ePrivacy derogation, introduced in 2021 to permit voluntary detection of CSAM while permanent rules were negotiated, expired on April 3, 2026. The permanent framework is still not in place.

This is not just a procedural failure. It is a moral hazard. Lawmakers can demand child safety, refuse to create a workable legal framework, and then leave platforms, trust and safety teams, law enforcement, and victims to absorb the consequences.

For companies operating trust and safety systems, none of this reads as an abstract legal debate. It is the difference between running a governed safety process and making high-stakes decisions inside a regulatory vacuum.

How We Got Here

Before 2020, major platforms used voluntary detection systems to identify known CSAM, grooming behavior, and previously unseen abuse material. Reports flowed to bodies like NCMEC and the IWF, and from there to law enforcement. The system was imperfect, but for many major services it formed a core part of the online child protection pipeline in Europe.

In late 2020, the European Electronic Communications Code brought interpersonal messaging services within the scope of the EU ePrivacy Directive. That created legal uncertainty for communication services using automated detection technologies, and several platforms paused or curtailed scanning in the EU as a result. The European Commission responded with a proposal for an interim regulation allowing providers to continue voluntary detection and reporting.

In July 2021 the EU adopted that interim measure as Regulation 2021/1232, the temporary ePrivacy derogation. It was explicitly a stopgap, intended to preserve detection capabilities while permanent legislation was developed. In May 2022, the Commission proposed that permanent legislation, the Child Sexual Abuse Regulation, which would have established detection, reporting, and removal obligations alongside an EU Centre on Child Sexual Abuse.

Between 2022 and 2026, the permanent regulation became stuck in political deadlock over scope, encryption, and the structure of detection orders. The temporary derogation was extended once, in 2024, to April 3, 2026. A short-lived path to a further extension collapsed in March 2026. Parliament first endorsed a temporary extension, but negotiations with the Council broke down over scope, including whether the measure should exclude end-to-end encrypted communications. On March 26, Parliament voted not to prolong the interim derogation.

The EU has now run the same experiment twice. The first time, in 2020, the consequences were sharp enough that Brussels moved quickly to legislate a fix. The second time, in 2026, those consequences were predictable from the first round. Lawmakers chose to accept that risk anyway.

What I Saw Working on This at Meta

I have seen this dynamic up close. While working on child safety at Meta, I worked through the operational consequences of legal changes that affected how platforms could detect and report CSAM in Europe. What looked from the outside like a policy debate showed up internally as a series of very practical questions: what could still be reviewed, what could still be escalated, what could still be reported, and what risk the company was willing to carry while the law remained unsettled.

The issue moved quickly from legal interpretation to real-world safety impact: fewer signals, fewer reports, public criticism, and pressure from officials who still expected platforms to act aggressively within a newly constrained legal environment.

That experience revealed a deeper problem in how online safety policy gets made. When lawmakers create ambiguity, the harm does not stay abstract. It shows up in queues, reports, investigations, enforcement gaps, and missed opportunities to identify victims. Legal uncertainty becomes operational risk, and that risk eventually lands somewhere. It usually lands on the child.

The Moral Hazard

The moral hazard is not that lawmakers oppose CSAM detection. No serious policymaker wants children to be abused online. The hazard is that lawmakers can avoid making the hard trade-off, allow the legal basis for detection to lapse, and still blame platforms when abuse is missed. They can call for privacy, call for safety, and avoid owning the uncomfortable places where those commitments collide.

In practice, this pushes risk downward. Trust and safety teams inherit the ambiguity. Legal teams inherit the liability. Law enforcement quietly absorbs the drop in referrals. Victims get whatever falls out of the bottom. The political class retains the ability to claim privacy as a victory and child safety as an industry obligation, while bearing none of the cost of the gap between the two.

That is what makes this a moral hazard rather than a policy disagreement. The people whose decisions caused the vacuum are not the people who have to operate inside it.

The Privacy Concerns Are Real. The Absence of a Framework Is Not a Privacy Framework.

Any honest version of this argument has to take the civil liberties critique seriously. Detection systems can be overbroad. False positives matter. Private communications deserve stronger protection than public content. End-to-end encryption raises genuinely difficult questions. Groups like CDT Europe and EDRi have warned that broad scanning mandates can be incompatible with European fundamental rights, and those concerns should not be dismissed.

A workable regime needs necessity, proportionality, transparency, auditability, judicial oversight, meaningful safeguards against function creep, and clear limits on the detection technologies that can be deployed. Some Parliament-backed safeguards pointed in this direction: restricting detection to known and previously verified material, requiring judicial authorization, and excluding end-to-end encrypted communications from scope. That is a materially different model from broad "chat control" proposals: targeted, accountable detection with real safeguards.

But not legislating does not produce a privacy-protective system. It just leaves companies, regulators, and safety teams operating without one. The hardest decisions get pushed into private legal risk assessments inside individual companies, which is precisely where they should not sit. The legitimate critique of mass scanning should not become cover for inaction when a narrower, safeguarded regime is on the table.

What Comes Next

The practical consequences of the lapse are already visible. The safety consequences are predictable.

Legal certainty has dropped. Companies operating in Europe now face significant uncertainty over whether and how they can use proactive detection tools in interpersonal communications. Some will curtail scanning to manage risk. Some will try to construct an alternative legal basis under the GDPR or the DSA and hope a regulator does not challenge it. Neither is a substitute for a coherent regime.

Reports are likely to fall. The IWF and others have warned that when detection stops, reports fall, citing a 58 percent drop in EU-origin reports to NCMEC over 18 weeks during the 2020 disruption. That is not abuse disappearing. It is the system getting worse at seeing it.

Incentives for platforms get worse. The safest legal posture may now be to do less, document less, and limit proactive detection even where the safety case is strong. That is the opposite of what a serious child safety regime should produce.

Smaller companies are hit hardest. Large platforms have legal teams, policy teams, and bespoke safety infrastructure to navigate ambiguity. Smaller ones do not, and many will default to inaction.

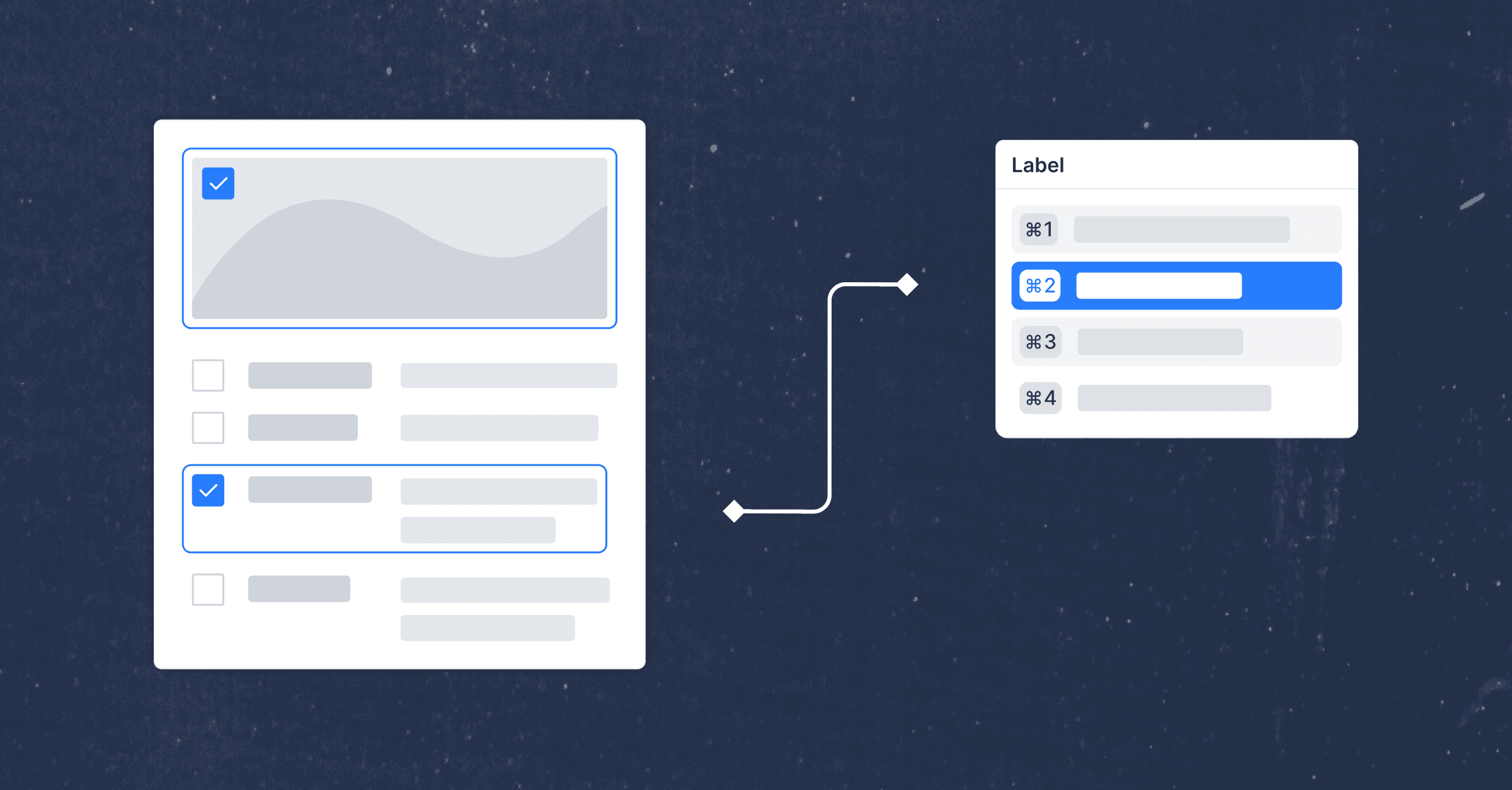

This is the pattern we see clearly at Cinder. We work with trust and safety teams across companies of very different sizes, risk profiles, and regulatory environments. One thing shows up again and again: once a policy position reaches production, slogans stop being useful. Teams need workflows, evidence, escalation paths, auditability, and room to adapt policy to operational reality.

CSAM is the clearest possible example of why online safety regulation must be implementable, not just rhetorically satisfying. Tooling can do a great deal. It can help teams enforce policy consistently, preserve evidence, manage review, and build accountable processes. But it cannot substitute for legal certainty about what platforms are permitted to do. That certainty is what the EU has just removed.

What Needs to Happen

There is still a window for the EU to produce something durable.

The Parliament-backed safeguards (narrower scope, judicial oversight, and the exclusion of end-to-end encrypted communications) should be treated as a serious basis for a permanent regime, not as a stepping stone toward something more expansive. That position is no one's first choice. But it is defensible, and it would restore a baseline of protection. Lawmakers who rejected it as too narrow and lawmakers who rejected it as too broad both need to account for the vacuum that followed.

The Commission should also be explicit about its enforcement posture during the gap. The current ambiguity is the worst possible outcome: platforms that do less protect themselves legally, and platforms that do more are exposed. Public clarity on whether good-faith detection of known CSAM under existing frameworks will draw enforcement action would meaningfully reduce harm in the interim.

And the conversation needs to stop treating inaction as neutral. It is not. The 2020 episode established the counterfactual. Every week the EU operates without a clear legal basis is a week in which referrals fall, identification of victims slows, and perpetrators get a quieter operating environment. That is a policy choice. It should be defended as one.

A Closing Thought

Privacy and child safety are not mutually exclusive values. But reconciling them requires lawmakers to do the hard work: define lawful detection, set strict safeguards, require transparency, create audit mechanisms, and give platforms clear obligations rather than contradictory expectations.

What Europe has created instead is a legal vacuum. And in that vacuum, everyone can claim to care about child safety while no one owns the consequences of inaction.

Children deserve better than temporary derogations. Platforms need more than political blame. And lawmakers should not be allowed to outsource the hardest moral choices in online safety while reserving the right to criticize everyone else for the outcome.

Sam Freeman is Founding Forward Deployed Engineer at Cinder and an MSc candidate in the Social Science of the Internet at the Oxford Internet Institute. He previously worked as a technical lead in the Integrity group at Meta, where he built perceptual hash matching technologies to address CSAM, NCII, and terrorist content.