The Safety Features Bad Actors Love Most

Courts are starting to treat product design as the source of platform harm. Brian Fishman argues online safety needs a more sophisticated model for adversarial tradeoffs.

The most dangerous capabilities in Trust and Safety aren't the ones bad actors develop. They're the tools we build to stop them.

That's the lesson I keep returning to after courts in New Mexico and Los Angeles recently found social media companies liable for harms facilitated on their platforms. Both cases rested on a novel theory: that the design of the platforms themselves, not just the content posted on them, enabled the harm. Advocates have celebrated the rulings. I understand why. But I think these decisions were sloppy, and they will do more harm than good.

What the Courts Actually Decided

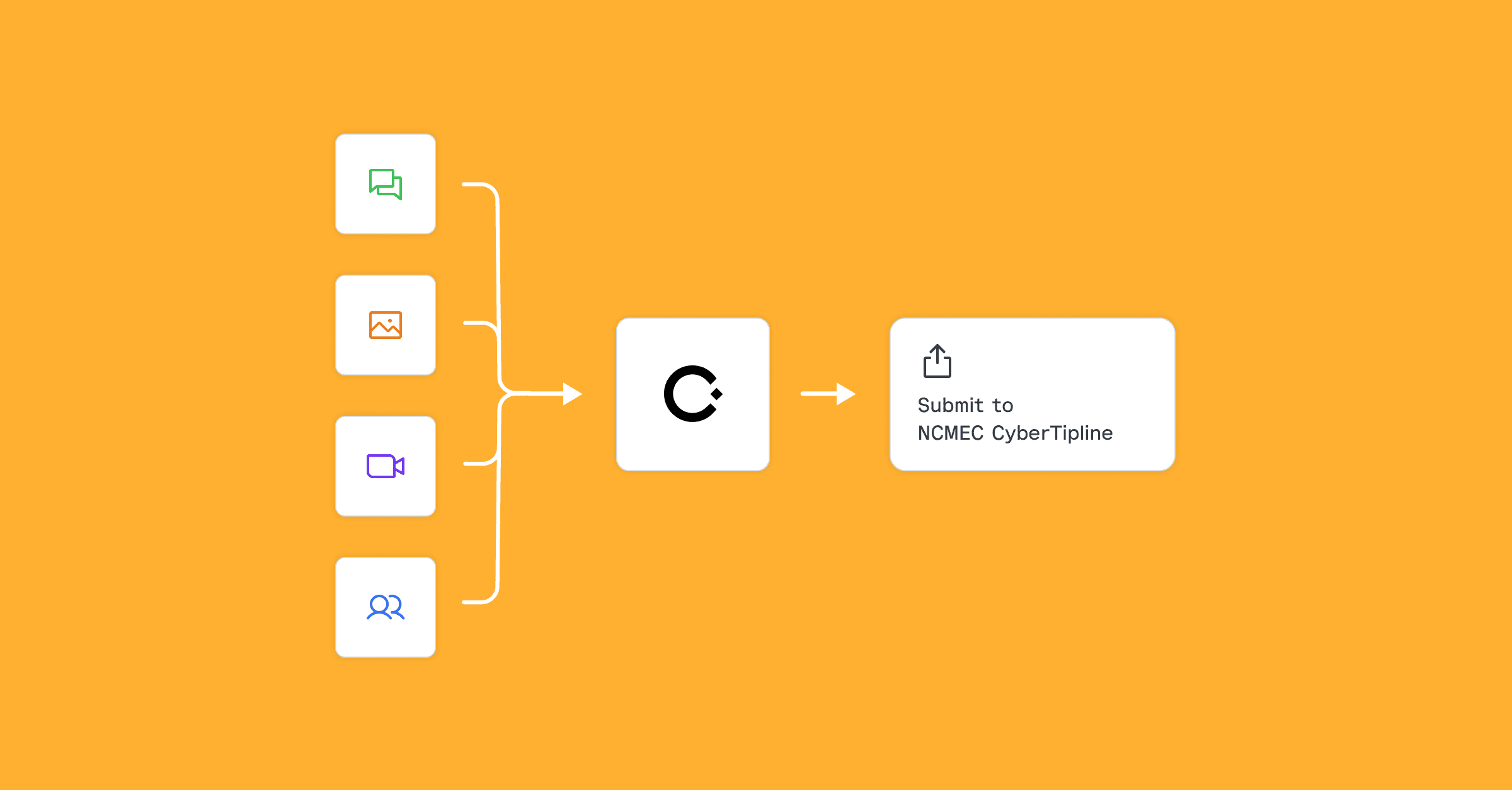

In the New Mexico case, the state Attorney General sued Meta for failing to protect children and secured a $375 million fine. The AG cited internal documents arguing that product choices, including the development of encrypted messaging on Facebook and Instagram, prevented identification of child sexual abuse material (CSAM) and inhibited referrals to the National Center for Missing and Exploited Children (NCMEC).

In the Los Angeles case, Meta and YouTube were ordered to pay $6 million to a woman identified as K.G.M., who alleged that addictive product design contributed to her depression and dangerous anxiety. TikTok and Snap settled before trial.

Both cases hinge on an idea designed to get around First Amendment speech protections and Section 230 of the Communications Decency Act, which largely shields platforms from liability for the speech posted on them and from the implications of their efforts to moderate content. The theory: product design choices, such as encrypted messaging or algorithmic feeds, do not constitute speech. Therefore, platforms can be held liable for them if it can be shown that the features themselves contribute to harm.

Why This Is More Complicated Than It Looks

Product choices have real safety implications. But they are rarely straightforward, and they almost never cut in one direction.

Take encryption. I spent years at Meta arguing against encrypting Messenger and Instagram Messages. My reason: it would undermine our ability to identify and disrupt terrorist planning. The New Mexico case essentially vindicated that concern, arguing that encryption reduced Meta's ability to surface CSAM and make referrals to NCMEC.

But encryption also protects people from government surveillance, private sector data collection, and stalkers. Signal, iMessage, and WhatsApp have been end-to-end encrypted for years. Does this ruling mean they can be held liable for harms they are structurally unable to detect?

The jury instructions in the LA case asked only whether product design was a "substantial factor" in K.G.M.'s struggles. Under that standard, virtually any design choice might qualify, depending on the individual and the circumstances, not just features widely considered risky, like algorithmic recommendations and infinite scroll. Private messaging, not just encrypted messaging, is often a vector for far more serious abuse than public posts. Could a platform be held responsible for enabling private communication of any kind? It would be ridiculous, but the New Mexico ruling fails to provide a clear answer as to why not.

The Problem These Cases Ignore

Here's what years of Trust and Safety work taught me: the most acute harms almost always surface in the features designed to protect people or give them privacy. Private groups. Direct messages. Encrypted messages. Bad actors colonize community spaces and exploit them for recruitment, propaganda, and harassment, but the worst of the worst generally happens out of sight in more private spaces.

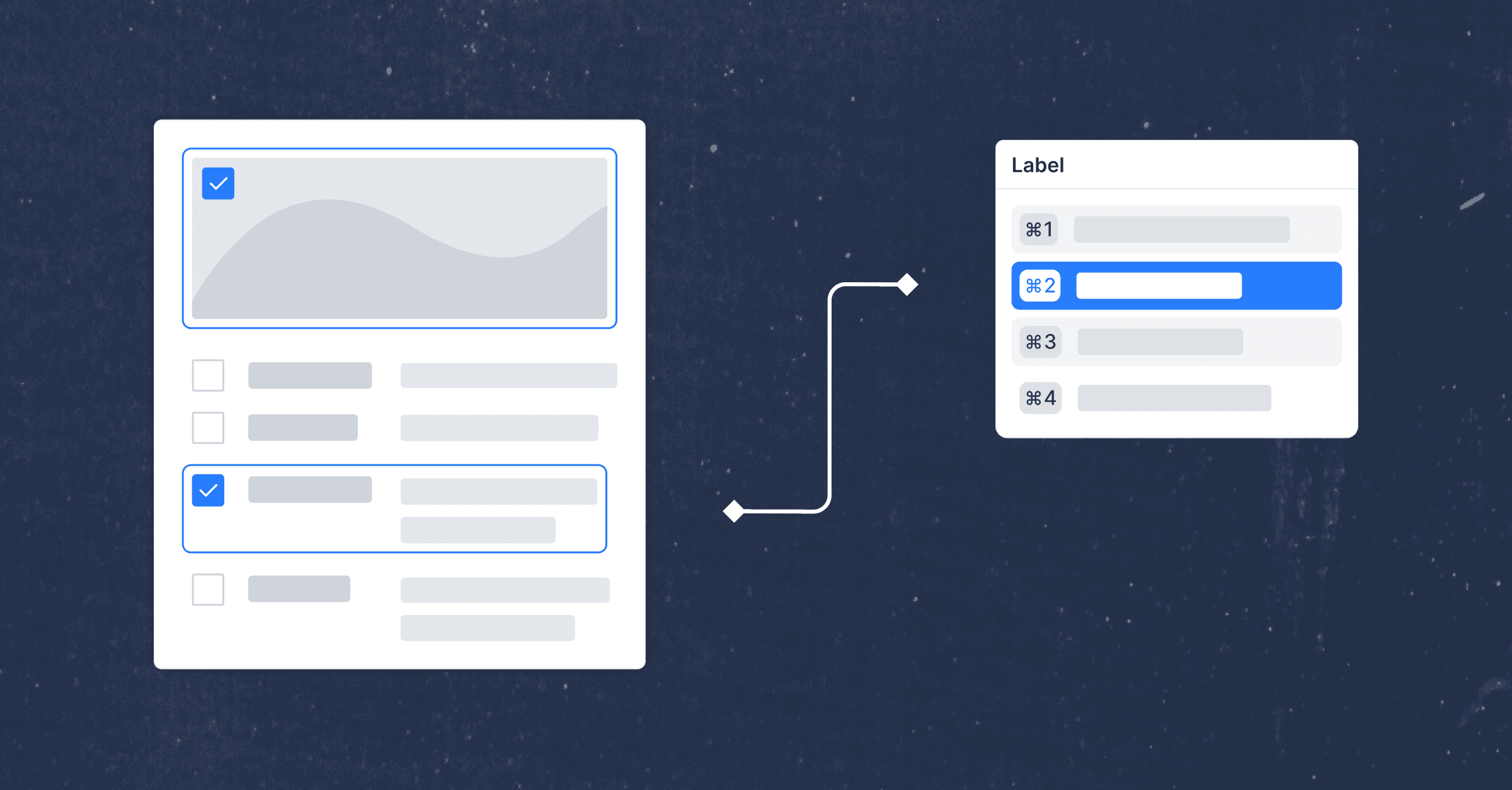

T&S work will beat the assumptions out of you. When I arrived at Meta with a mandate to disrupt the Islamic State's abuse of the platform, I assumed user reports were an underutilized signal for identifying harm. They weren't. It turns out that user reports of terrorist content were a terrible way to actually identify terrorist content. Users either did not understand what content actually violated the rules, or they pursued personal or political agendas by reporting content they disliked as "terrorism." A seemingly straightforward feature, now required by the DSA and designed to protect users and give them a voice, became another vector for abuse.

I am not defending the particular features at issue in these court cases. But I know first-hand that well-intentioned but imprecise efforts to keep people safe online backfire. And these rulings are imprecise in ways that will backfire by disincentivizing safety research at platforms, incentivizing unmerited lawsuits, and creating a cause of action against platforms that implement features like encryption, which bring numerous benefits even if they also carry costs.

What Just Rulings Would Actually Require

The New Mexico and Los Angeles juries reached a conclusion that is emotionally correct: platforms enabled harm, even without intending it. Platforms that profit while facilitating serious harm to children and young people deserve scrutiny and accountability. There is certainly no reason to feel bad for Meta.

But holding platforms liable based on product design choices, without accounting for the genuine safety tradeoffs those choices involve, doesn't make the internet safer. It pushes companies toward decisions that look defensible in court rather than decisions that actually reduce harm. Would society be better without private digital spaces? Of course not, yet those features undoubtedly create more risk of abuse than other features.

The law has always struggled to keep pace with how technology works, and Trust and Safety is no exception. These cases illustrate that courts need a much more sophisticated model for evaluating platform decisions, one that accounts for the adversarial nature of the problem, the tradeoffs inherent in every design choice, and the fact that mechanisms built to protect people are often the next surface bad actors aim to abuse.

Brian Fishman is co-founder of Cinder and former Director of Dangerous Organizations at Meta. He previously led research at West Point's Combating Terrorism Center and is the author of The Master Plan: ISIS, al-Qaeda, and the Jihadi Strategy for Final Victory (Yale University Press).