
A Night with Cinder: What It Actually Takes to Fight Synthetic NCII

At Cinder's Shaping Safer AI Systems event, Alexios Mantzarlis and Chris Grigg laid out the policy and engineering reality of fighting synthetic NCII at scale.

Cinder hosted experts from tech and policy for Shaping Safer AI Systems, an event at the company's New York City headquarters in SoHo, where speakers discussed what it actually takes to detect, respond to, and stay ahead of synthetic non-consensual intimate imagery (SNCII) at scale.

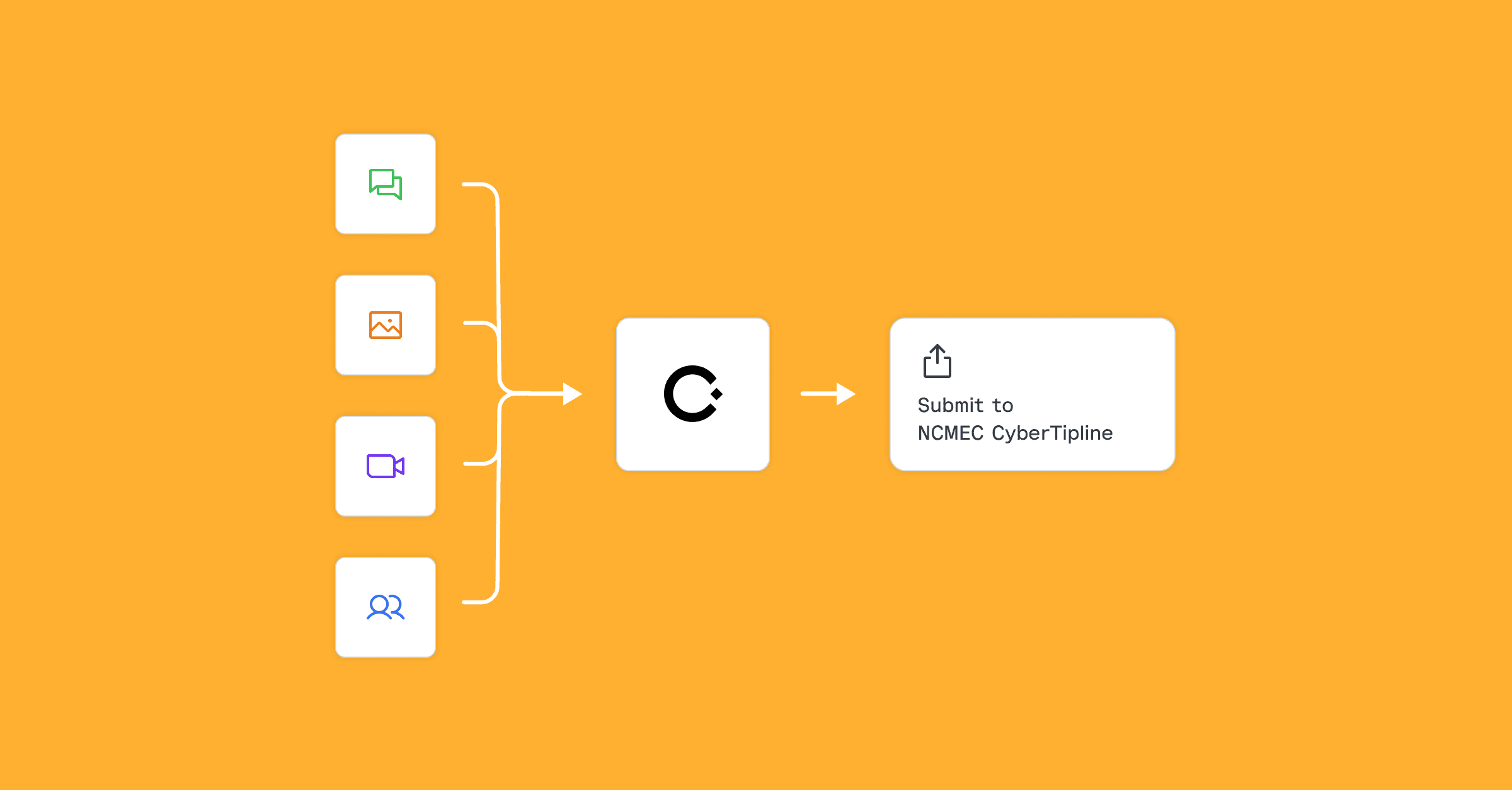

Alexios Mantzarlis of Cornell Tech has spent years researching gender-based violence and AI-generated harm, while Cinder software engineer Chris Grigg works daily on the AI systems that detect and respond to abuse at scale. Together, they gave the room something rare: a clear-eyed view of the threat, grounded equally in policy and engineering reality.

"This isn't a hard problem," Mantzarlis told the audience. "It's a problem the industry has chosen not to solve."

The numbers back him up. UNICEF estimates approximately 1.2 million children may have been targeted in school-based incidents alone. Reporters around the world have documented almost 100 cases of nudification tools being used to create non-consensual intimate imagery inside school communities. Ads for these tools ran in the tens of thousands on major consumer platforms. Some of the worst offenders benefit from Google Single Sign-On; others are still available in app stores today. The infrastructure enabling the abuse isn't hidden. It runs on the same platforms, hosting providers, and ad networks that power legitimate businesses.

Mantzarlis organized his talk around three levers: deplatforming the tools of abuse, building better detection technology, and deterring users through education. None of them is a complete solution on its own, but together they represent a realistic map of where meaningful progress is possible right now.

On deplatforming, his argument was direct: a coordinated decision by a handful of major infrastructure providers could eliminate the vast majority of the harm. Australia, the EU, and California have moved in this direction. The US federal TAKE IT DOWN Act criminalizes the distribution of synthetic NCII and requires platforms to remove it within 48 hours of a valid removal request. Mantzarlis's view: welcome, but insufficient. The content doesn't travel the way the law assumes. It spreads through disappearing messages and on phones passed around in classrooms.

Meanwhile, Grigg presented the ground-level reality of building AI systems that fight harm in production. The marketing around AI, he argued, obscures how hard this actually is. Deploying the most capable frontier model doesn't solve the problem. The most powerful models tend to overthink, miss latency requirements, and optimize for the data they were trained on rather than the problem at hand. Attackers are using the same models with lower standards and no accountability, and they move fast.

What works is more disciplined: tight iteration on input signals, honest calibration of model uncertainty, and human escalation paths designed in from the start, not as a fallback but as a core feature. The adversaries adapt constantly, and the platforms that are winning are the ones built to move with them.
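To make the idea of escalation as a core feature concrete, here is a minimal sketch, not a description of Cinder's systems. It assumes a classifier that emits a calibrated probability of abuse and routes the uncertain middle band to human reviewers instead of forcing an automated decision. The names `Action`, `Thresholds`, and `route`, and the threshold values, are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    HUMAN_REVIEW = "human_review"
    REMOVE = "remove"


@dataclass(frozen=True)
class Thresholds:
    # Illustrative values only; real thresholds would be tuned on labeled data.
    remove_at: float = 0.95
    review_at: float = 0.30


def route(score: float, thresholds: Thresholds = Thresholds()) -> Action:
    """Map a calibrated abuse probability to an action.

    Scores in the uncertain band [review_at, remove_at) go to a human
    reviewer, so escalation is part of the normal path, not an error case.
    """
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be a probability in [0, 1]")
    if score >= thresholds.remove_at:
        return Action.REMOVE
    if score >= thresholds.review_at:
        return Action.HUMAN_REVIEW
    return Action.ALLOW
```

With these assumed thresholds, a score of 0.5 is routed to a human reviewer. The band only means something if the scores are honestly calibrated, which is why calibration and escalation belong to the same design decision.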

"It's easy to build agents that fit the data instead of the problem," Grigg said. "The cost of getting that wrong, at scale, is real."